[Listen to the podcast]

[View a PDF of this article]

The full version of the article as published in the July 2012 issue of Sky & Telescope is reprinted with the permission of the magazine. The original podcast with some additional material may be found on the Sky & Telescope website at http://www.skyandtelescope.com/skytel/beyondthepage/Interview-with-Joel-Primack-152323655.html.

Universe on Fast Forward

Supercomputer modeling is transforming cosmology from an observational science into an experimental science

By JOEL R. PRIMACK and TRUDY E. BELL / July 2012

![COSMIC WEB: The Bolshoi simulation models the evolution of dark matter, which is responsible for the large-scale structure of the universe. Here, snapshots from the simulation show the dark matter distribution at 500 million and 2.2 billion years [top] and 6 billion and 13.7 billion years [bottom] after the big bang. These images are 50-million-light-year-thick slices of a cube of simulated universe that today would measure roughly 1 billion light-years on a side and encompass about 100 galaxy clusters.](images/S&Timages/ST0.jpg) Sources: STEFAN GOTTLÖBER / LEIBNIZ-INSTITUT FÜR ASTROPHYSIK POTSDAM

EVOLVING UNIVERSE Left to right: These frames from the Bolshoi simulation depict the universe at redshifts of 10, 3, 1, and 0, which correspond to cosmic ages of 490 million years, 2.2 billion years, 6 billion years, and 13.7 billion years (today). The bright areas have high densities of dark matter. As the far left frame shows, Bolshoi's early universe has only a modest degree of lumpiness in the distribution of matter. But the subsequent frames demonstrate how gravity, acting over billions of years, gathers matter into long filaments that surround immense voids. Galaxies are concentrated along the filaments, clusters at the nodes.

Old-fashioned star projectors show the changing night sky, acquainting planetarium visitors with constellations and planetary motions. Modern digital projectors can also show the locations of distant galaxies, and can even reveal their three-dimensional distribution in the cosmos by allowing the viewer to fly through intergalactic space.

But that's not nearly good enough for cosmologists — scientists who work to understand the composition, structure, and evolution of the entire universe. With the enormous power of the fastest supercomputers in the world, we now can model the three-dimensional evolution of the universe, from shortly after the Big Bang all the way to the present.

The computational power of supercomputers is literally transforming cosmology into an experimental science. Through supercomputer simulations, astronomers now can change the physical assumptions and see how the predictions change when, say, stars explode or galaxies collide. Simulations — along with stunningly beautiful visualizations of the results — let astronomers explore the universe in unprecedented detail, revealing insights not previously accessible. Even more powerfully, simulations allow astronomers to run an astrophysical process forward in sped-up time, and to make predictions that can be tested by observations of the real universe. In short, high-performance simulation now has joined theory, observation, and laboratory experimentation as another pillar of the modern scientific method.

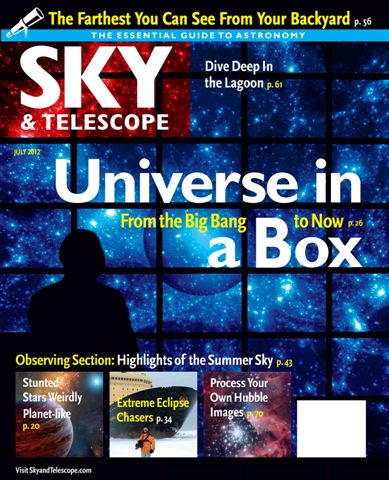

Sources: NASA/WMAP TEAM

INITIAL CONDITIONS According to standard cosmological models, the early universe experienced a period of inflation. This rapid growth spurt left behind slight variations in the density of matter. This lumpiness shows up as subtle warm (red) and cool (blue) spots in the cosmic microwave background (CMB), as revealed here in the seven-year dataset from NASA's WMAP satellite. Bolshoi and other cosmological simulations show how the CMB's higher-density regions served as "seeds" for the emergence and evolution of cosmic structure such as clusters of galaxies.

The latest and best supercomputer model of the evolution of the universe is called "Bolshoi," after the Russian word for "big" with the connotation of "great" or "grand." The Bolshoi simulation has allowed us to test the agreement between our modern cosmological theory and the observed structure of the universe, both nearby and very far away. As we look farther out in space with our best telescopes, we are looking further back in time. As far as we can tell, the Bolshoi simulation's predictions agree perfectly with the best observations.

NASA / AMES RESEARCH CENTER

DIGITAL WORKHORSE A large team of scientists performed the Bolshoi simulation on NASA's Pleiades supercomputer at NASA's Ames Research Center near San Jose, California. Bolshoi ran on 13,824 processors, making it roughly equivalent to 7,000 dual-processor Apple MacBook Air laptops. It used 12 terabytes of random-access memory, which is more than 1,000 times the RAM of high-end laptops. The simulation took the equivalent of 18 days over several months in 2009.

Although not the first project to model the evolution of the universe, Bolshoi is better than previous cosmological simulations, including the path-breaking 2005 Millennium simulation, for three reasons: the improved accuracy and precision of its input data from observational measurements, the power and speed of NASA's Pleiades supercomputer and the computer codes used, and the detailed analyses of the Bolshoi outputs that are being made available to the worldwide astronomical community.

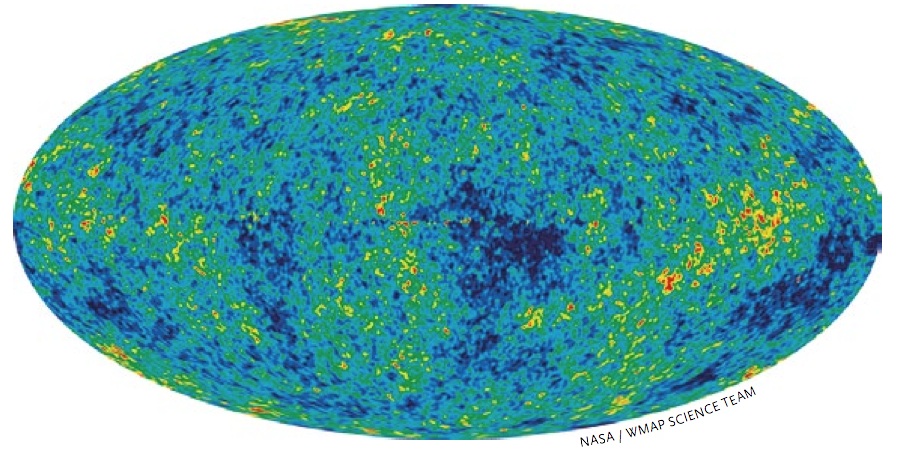

Sources: N. MCCURDY (UC-HIPACC) / R. KAEHLER, R. WECHSLER (STANFORD U.) / M. BUSHA (U. ZURICH) / SDSS

REALITY CHECK As this comparison shows, Bolshoi's predicted distribution of dark matter halos closely matches the observed distribution of visible galaxies in the Sloan Digital Sky Survey. Although the two images differ in their details, they are virtually identical statistically, which gives scientists a valid reason for optimism that Bolshoi is accurately portraying how the universe actually evolved. Each wedge spans a quarter of the way around the sky and looks out to about 2.8 billion light-years.

Well-Tested Theories

As with simple or complex computer programs, the quality of the results output by a cosmological simulation depends both on the accuracy of the observational data used as input and on the accuracy of the laws of physics used to specify the computational process. The laws of physics are the rules, if you will.

For Bolshoi, the equations of Albert Einstein's general theory of relativity are the rules for letting the supercomputer simulation unfold its model universe forward in time. This well-tested theory describes the behavior of space, time, and gravitation. Bolshoi is also based on the widely accepted and well-established modern theoretical framework for understanding the formation of the universe's large-scale structure: the Lambda Cold Dark Matter cosmology (abbreviated ΛCDM), based on the Cold Dark Matter theory devised by coauthor Primack and his colleagues in 1983-84.

In simplest terms, ΛCDM posits that right after the Big Bang, the universe rapidly inflated in size. This cosmic inflation vastly magnified tiny, random fluctuations in energy that existed at every point in space. These quantum fluctuations left matter and energy unevenly distributed throughout the universe. Assuming that the dark matter is cold (that is, that in the early universe it consisted of particles that moved much slower than the speed of light), then gravity caused high-density regions to coalesce into halos (clouds) of dark matter and ordinary atomic matter (which physicists call baryonic matter, because most of its mass comes from baryons: protons and neutrons in atomic nuclei). And these halos ultimately gave rise to galaxies and clusters of galaxies.

The Greek letter Lambda (Λ) is the symbol that Einstein gave to the cosmological constant, a possible property of space itself that represents a repulsive force that he thought could compensate for the attraction of gravity and permit a static universe. Einstein later called the cosmological constant his biggest blunder after Edwin Hubble showed that the universe is actually expanding. But now the evidence is extremely strong that there really is a cosmological constant — or something very much like it called dark energy. The Bolshoi simulation assumes that the dark energy is just Einstein's cosmological constant.

The ΛCDM cosmology also predicts that large-scale structure in the universe grows hierarchically through gravitation: repeated mergers of smaller dark matter halos create bigger ones. The principal purpose of the Bolshoi simulation is to compute and model the evolution of dark matter halos — thereby rendering the invisible visible for astronomers to study, and to predict visible structures that astronomers could then seek to observe. We also hope that the Bolshoi simulation may shed light on the exact nature of dark matter and dark energy, which remain unknown.

Where the Data Come From

So the Bolshoi cosmological simulation unfolds from Lambda Cold Dark Matter cosmology and general relativity. The observational input data are the most accurate known: the cosmological parameters measured from the heat radiation remaining from the Big Bang and the observed distribution of galaxies and galaxy clusters.

The cosmic microwave background radiation was discovered by accident in 1964 by Bell Laboratories physicists Arno Penzias and Robert Wilson. Princeton University cosmologists Robert Dicke, James Peebles, and David Wilkinson quickly realized that the background hiss detected by Penzias and Wilson was the cosmic microwave background that George Gamow and his colleagues had predicted in 1948 would be left over after the universe had cooled over billions of years from a hot Big Bang.

ΛCDM predicts how unevenly matter and energy had to be distributed so that a slightly lumpy early universe could form galaxies and clusters of galaxies, and even larger cosmic structures such as superclusters and enormous voids where few galaxies are found. In 1989 NASA launched the Cosmic Background Explorer (COBE) satellite to see if the cosmic microwave background indeed showed the slight differences in temperature that models of such a lumpy early universe predicted. In 1992 the COBE team announced that it had indeed seen the predicted tiny "anisotropy" (unevenness) in the distribution of temperatures in different directions. Six years later, two independent teams reported observations of Type Ia supernovae showing that the universe's expansion has been accelerating for the past several billion years. That acceleration suggests the presence of dark energy.

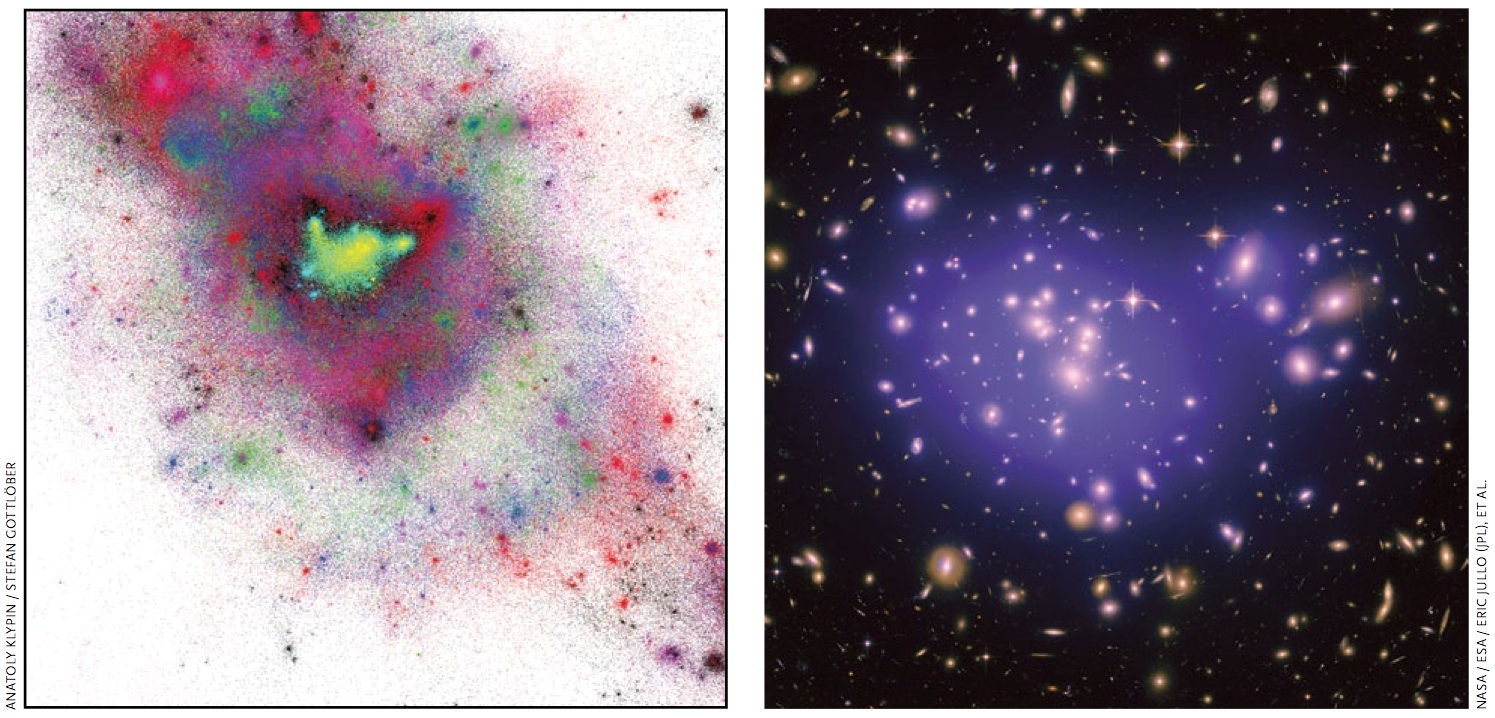

LEFT: ANATOLY KLYPIN / STEFAN GOTTLÖBER; RIGHT: NASA / ESA / ERIC JULLO (JPL), ET AL.

CLUSTERS SIMULATED AND REAL Left: This multicolored, ultrathin slice of the Bolshoi simulation is about 23 million light-years across and 7 million light-years thick. The close-up view shows the complex structure of dark matter in and around a cluster of galaxies. Yellow dots denote where dark matter is moving fastest (exceeding 1,000 km/second), black dots the slowest (50 km/second). Right: This composite Hubble Space Telescope image shows the inner region of the galaxy cluster Abell 1689, 2.2 billion light-years away in Virgo. By measuring how the cluster has gravitationally lensed the light of background galaxies, astronomers can roughly map the distribution of dark matter, which is shaded purple. The right image is comparable in scale to the central bright region of the left image.

As the observational evidence has steadily improved, it has also become increasingly consistent. Now all the different methods of measurement show that about 73% of the cosmic density today is dark energy and about 22% is cold dark matter. Only about 5% is ordinary baryonic matter — and only about 0.5% is visible as stars or gas.

NASA's Wilkinson Microwave Anisotropy Probe (WMAP), launched in 2001, has been crucial in these precision measurements. WMAP and other observations definitively determined the age of the universe (to within 1% accuracy) as 13.7 billion years. More important, over the past decade, WMAP has produced high-resolution maps, meticulously plotting in fine detail the temperature and other characteristics of the cosmic microwave background across the entire sky. Analyses of the tiny variations in this primordial radiation have revealed a wealth of information about the universe's history, structure, and composition. The slight temperature differences in the microwave background correspond to regions in the early universe that were slightly warmer and cooler, and thus less dense and more dense. As the early universe expanded, gravity vastly magnified the effects of those density variations, giving rise to the now-famous cosmic web: long filaments of galaxies surrounding immense voids that are seen clearly in large-scale galaxy surveys such as the Sloan Digital Sky Survey.

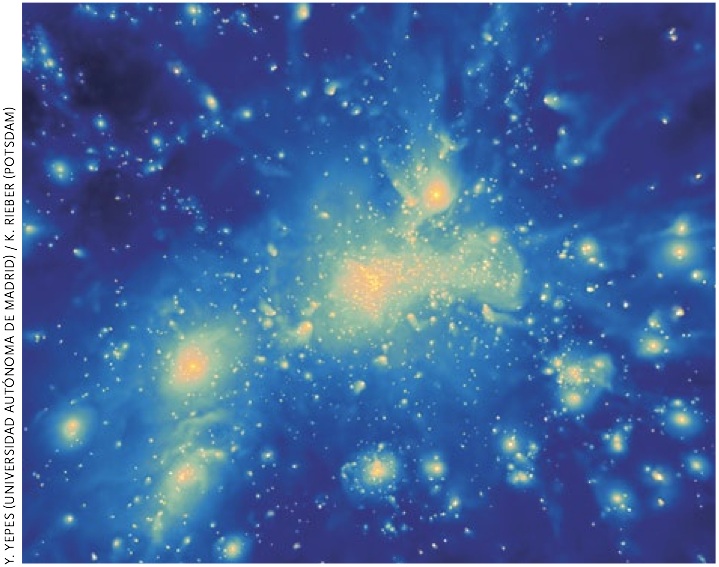

Y. YEPES (UNIVERSIDAD AUTÓNOMA DE MADRID) / K. RIEBER (POTSDAM)

GAS DENSITY This frame shows gas distribution in a massive cluster from BigBolshoi, using the same initial conditions but treating the baryons separately from dark matter. For more Bolshoi images and videos, visit http://hipacc.ucsc.edu/Bolshoi.

In 2008 the WMAP team released its WMAP5 data set, a five-year run of cumulative measurements of the detailed structure of the microwave background combined with the most reliable ground-based observations. The Bolshoi simulation is based on the cosmological parameters of WMAP5. Moreover, WMAP5 is completely consistent with a later data set released in 2010 called WMAP7, which is based on cumulative results of WMAP's first seven years, plus additional ground-based observations.

Universe in a Box

Just as many sciences seeking to understand large populations rely on analyzing a smaller representative sample of a population, the Bolshoi simulation modeled a smaller random representative volume of the universe. Specifically, it computed the evolution of a cubic volume measuring about 1 billion light-years on a side today, a volume that would contain millions of galaxies, all forming and subsequently residing in dark matter halos.

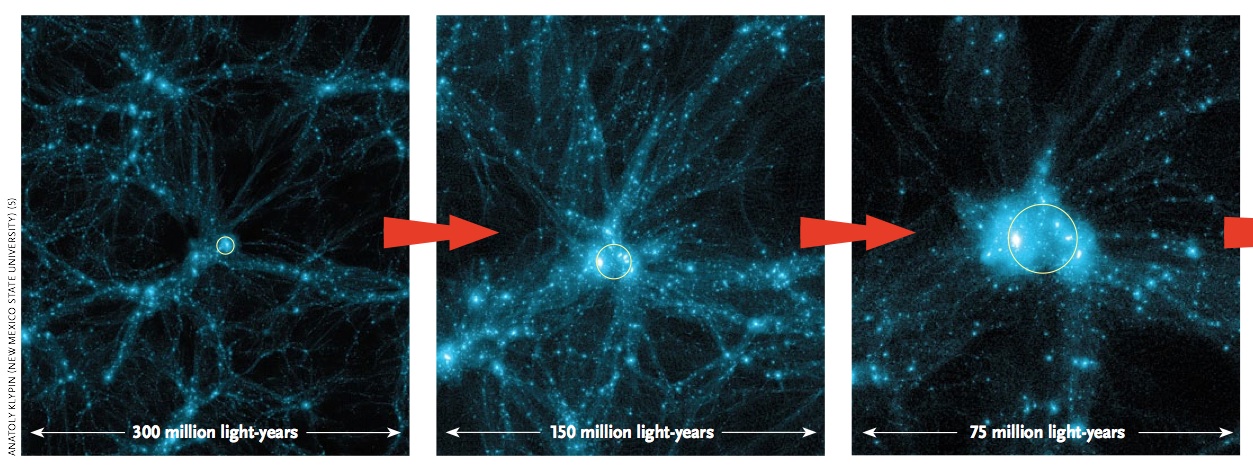

ANATOLY KLYPIN (NEW MEXICO STATE UNIVERSITY) (5)

ZOOMING IN Bolshoi allows cosmologists to zoom in and view how matter is organized at different size scales. Bolshoi's predictions can then be compared to astronomical observations. These boxes are all about 50 million light-years thick, but range in size (left to right) from 300 million light-years wide to only 18.75 million light-years across. The dense blobs are dark matter halos; the smallest halo in the right image would host a galaxy the size of our Milky Way.

The Bolshoi simulation was run on the Pleiades supercomputer at NASA's Ames Research Center near San Jose, California, ranked in November 2011 as the seventh fastest supercomputer in the world and the third fastest in the United States. It was a monumental effort, funded by grants from NASA and the National Science Foundation. The full Bolshoi computation took 6 million CPU hours. Its software, developed by Anatoly Klypin, was a code called Adaptive Refinement Tree (ART), which achieved a resolution of just 5,000 light-years, quite fine compared to the enormous volume simulated. For comparison, the Milky Way's visible disk is about 100,000 light-years across, and its dark matter halo is about 2 million light-years across.
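As a rough consistency check, the figures quoted in this article can be combined in a few lines of Python. The sketch below assumes that all 13,824 processors were busy for the entire run, which is a simplification; the variable names are illustrative only.

```python
# Figures quoted in the article; the variable names are illustrative.
CPU_HOURS = 6_000_000          # total computation
PROCESSORS = 13_824            # processors used on Pleiades
BOX_SIDE_LY = 1_000_000_000    # side of the simulated cube today, in light-years
RESOLUTION_LY = 5_000          # finest resolved scale, in light-years

# Assumption: every processor was busy for the whole run.
wall_clock_days = CPU_HOURS / PROCESSORS / 24

# Ratio of the largest simulated scale to the smallest resolved scale.
dynamic_range = BOX_SIDE_LY // RESOLUTION_LY

print(f"Wall-clock time: about {wall_clock_days:.0f} days")
print(f"Box side / resolution: {dynamic_range:,}")
```

Under that assumption the run works out to about 18 days, matching the figure quoted for Pleiades, and the simulation spans a factor of 200,000 between its box size and its finest resolution.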

The Bolshoi simulation clock was started about 24 million years after the Big Bang. No supercomputers were needed to simulate what happened before then, because the fluctuations in cosmic density were still small, so simpler calculations could do an accurate job. The Bolshoi simulation then followed the evolution of 8.6 billion particles, all gravitationally interacting with one another. Each particle represented about 200 million solar masses (about 1/5,000th the mass of the Milky Way's dark matter halo) — including baryonic and dark matter.
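The particle numbers above imply a total simulated mass and a mass for the Milky Way's dark matter halo. The following Python sketch does that arithmetic using only the rounded figures quoted in this article, so the results are order-of-magnitude estimates rather than the simulation's exact parameters.

```python
# Rounded figures quoted in the article; names are illustrative.
N_PARTICLES = 8_600_000_000          # particles followed by Bolshoi
PARTICLE_MASS_MSUN = 200_000_000     # solar masses represented by one particle
PARTICLES_PER_MW_HALO = 5_000        # one particle is ~1/5,000 of the Milky Way halo

# Total mass represented in the simulated cube.
total_mass_msun = N_PARTICLES * PARTICLE_MASS_MSUN

# Implied mass of the Milky Way's dark matter halo.
mw_halo_mass_msun = PARTICLE_MASS_MSUN * PARTICLES_PER_MW_HALO

print(f"Total simulated mass: {total_mass_msun:.2e} solar masses")
print(f"Implied Milky Way halo mass: {mw_halo_mass_msun:.1e} solar masses")
```

With these inputs, a halo like the Milky Way's (about a trillion solar masses) is traced by only a few thousand particles, which gives a sense of how much detail the simulation can resolve inside a single galaxy-sized halo.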

During the simulated evolution of the universe, the supercomputer captured gigantic tables of numbers that correspond to three-dimensional "snapshots," equivalent to frames of a giant 3-D movie. Each snapshot contains about 400 gigabytes of data, preserving all the particles and their motions at that moment. Analyses of these snapshots (resulting in tables of the properties of all the dark matter halos at 180 different times from the Big Bang to the present, and charting how the halos at earlier times merge to form those at later times) are now accessible to astronomers worldwide, allowing them to explore the three-dimensional ΛCDM model of the universe and study how dark matter halos, their galaxies, and clusters of galaxies coalesced and evolved.

For example, the simplest way to associate galaxies with the dark matter halos uses a method called halo abundance matching (HAM), in which more luminous galaxies are associated with halos in which the dark matter particles are moving faster. Such an association is in accord with observations, which show that both spiral and elliptical galaxies are more massive and brighter if their constituent stars are moving faster.
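The idea behind halo abundance matching can be illustrated with a toy rank-ordering in Python. This is a minimal sketch, not the procedure used in the Bolshoi analyses: it assumes the halo and galaxy samples come from the same volume, so that matching by rank stands in for matching by cumulative number density. The function name `abundance_match` and all the sample values are made up for illustration.

```python
def abundance_match(halo_velocities, galaxy_luminosities):
    """Pair the k-th fastest halo with the k-th most luminous galaxy.

    Returns a dict mapping halo index -> assigned luminosity.
    """
    n = min(len(halo_velocities), len(galaxy_luminosities))
    # Halo indices ordered from fastest to slowest dark matter motions.
    halo_order = sorted(range(len(halo_velocities)),
                        key=lambda i: halo_velocities[i],
                        reverse=True)
    # Luminosities ordered from brightest to faintest.
    lum_sorted = sorted(galaxy_luminosities, reverse=True)
    return {halo_order[k]: lum_sorted[k] for k in range(n)}


# Made-up example values: halo velocities in km/s, luminosities in solar units.
halo_v = [220.0, 950.0, 130.0, 410.0]
gal_lum = [3.0e10, 8.0e8, 2.5e11, 1.0e10]

assigned = abundance_match(halo_v, gal_lum)
for halo_index in sorted(assigned):
    print(f"halo {halo_index}: v = {halo_v[halo_index]:6.1f} km/s, "
          f"L = {assigned[halo_index]:.1e}")
```

In this toy case the fastest halo (950 km/s) receives the brightest galaxy and the slowest (130 km/s) the faintest. A realistic application would also have to handle scatter in the relation and incomplete samples, which this sketch ignores.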

Halo abundance matching allows the Bolshoi simulation to predict the likelihood of finding galaxies of various luminosities at various separations. These predictions are in excellent agreement with observations, unlike predictions based on the Millennium simulations. Moreover, the high resolution of the Bolshoi simulation allows astronomers to predict the abundance of large galaxies (such as the Milky Way) also having nearby companions as bright and as massive as the Large and Small Magellanic Clouds. The predictions say that about 71% of big galaxies have no such bright companions, 23% have one, 5% have two (like the Milky Way), and 1% have three or more. These predictions from the Bolshoi simulation are again in excellent agreement with observations. The success of these and other comparisons of the Bolshoi predictions with observations is confirming our general picture of how cosmic structure has evolved.

Several groups of astrophysicists around the world are working to use the full Bolshoi dark-matter-halo merging history to model the evolution of galaxies, taking into account the transformation of gas into stars, the accretion of gas by supermassive black holes, and the effects of the energy thereby released.

Astronomers observe cold dark matter not only through its effects on cosmic expansion but also through its gravitational influence on smaller scales, by measuring the speeds of stars and gas moving in galaxies or of galaxies moving in clusters, or by measuring the bending of light around galaxies and clusters. All of this evidence shows that dark matter makes up most of the mass of galaxies and clusters.

But Wait, There's More

The Bolshoi simulation, run in 2009 and analyzed in 2010-11, was the first of a suite of separate simulations run on NASA's Pleiades supercomputer.

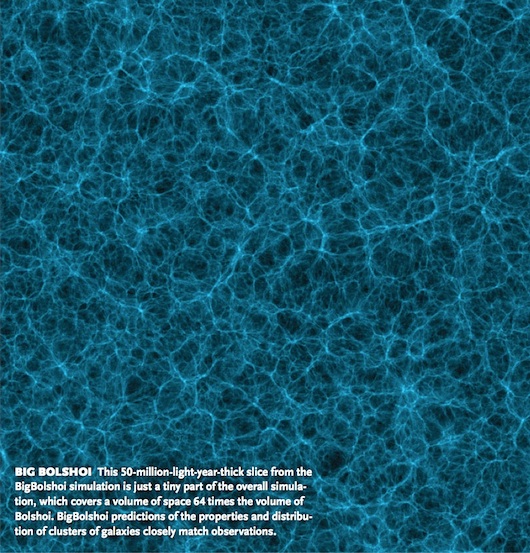

A second simulation, known as BigBolshoi (or MultiDark), was run in 2010 with the same number of particles, but in a volume 4 billion light-years across, four times bigger on each side than Bolshoi, and thus 64 times larger in volume. Although lower in resolution than Bolshoi, BigBolshoi predicts the properties and distribution of galaxy clusters and other very large structures in the universe. Its results will help with projects, such as the Baryon Oscillation Spectroscopic Survey (BOSS), which are attempting to determine the properties of dark energy by measuring the universe's expansion history.
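The trade-off between volume and resolution follows from simple scaling. The Python sketch below assumes that both simulations use the same number of particles (as stated above) and the same mean cosmic density, and it starts from the rounded Bolshoi particle mass of about 200 million solar masses. The resulting BigBolshoi particle mass is therefore only a rough estimate, not a published parameter.

```python
# Scaling from Bolshoi to BigBolshoi, using the article's round numbers.
SIDE_RATIO = 4                              # BigBolshoi box side / Bolshoi box side
BOLSHOI_PARTICLE_MASS_MSUN = 200_000_000    # rounded figure for Bolshoi

# Volume grows as the cube of the side length.
volume_ratio = SIDE_RATIO ** 3

# Assumption: same particle count and same mean density, so each
# particle must represent proportionally more mass.
bigbolshoi_particle_mass_msun = BOLSHOI_PARTICLE_MASS_MSUN * volume_ratio

print(f"Volume ratio: {volume_ratio}")
print(f"Estimated BigBolshoi particle mass: "
      f"{bigbolshoi_particle_mass_msun:.2e} solar masses")
```

Each BigBolshoi particle thus stands for roughly 64 times more mass than a Bolshoi particle. That is why the larger run is suited to galaxy clusters and other very large structures rather than to individual small galaxies.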

In autumn 2011, a third, higher-resolution simulation called miniBolshoi was started on Pleiades to model the formation and distribution of many small regions (a few tens of millions of light-years across) altogether containing hundreds of Milky Way-mass galaxies, with enough resolution to model the tiniest observed dwarf satellite galaxies. After improving the simulation code, we expect to finish miniBolshoi in 2012. By obtaining good statistics on the variations of galaxies' dwarf-satellite populations, we hope to shed light on why large galaxies seem to be surrounded by fewer satellite galaxies than predicted. If the predictions do not agree with observations, perhaps alternatives to the standard ΛCDM theory might be required. For example, the dark matter particles might interact with themselves rather strongly, even though they interact only gravitationally and perhaps weakly with familiar baryonic matter.

A New Experimental Science

Up to now, cosmology has been a historical and purely observational science. Like geology and paleontology, it is a historical science: the object is to reconstruct history, whether of the universe, Earth, or life, from evidence millions or billions of years old. A geologist or paleontologist can analyze a rock fragment or bone chip in a lab. But geology and paleontology are notoriously difficult, because our dynamic Earth destroys much earlier geological evidence. After all, few individual organisms turn into fossils, which is why we find so few fossils.

The Search for Dark Matter

Physicists expect that dark matter is some sort of elementary particle, like every other form of matter. If this particle does not interact through the strong nuclear force and is electrically neutral, it would interact with ordinary matter only weakly, like neutrinos. Such hypothetical dark matter particles are called "weakly interacting massive particles," or WIMPs.

If WIMPs are the dark matter, vast numbers of them are traveling right through Earth, just as neutrinos are, although WIMPs are much more massive. Many physicists are looking for these particles in super-sensitive experiments in deep underground laboratories, to shield them from cosmic rays. A few of these experiments show hints of WIMP detections. The evidence isn't convincing yet, but such experiments are rapidly increasing in sensitivity. They will either discover the WIMP or else rule out the popular candidate particles in the next few years.

It's also possible that the dark matter particle will be discovered indirectly. For example, in galaxy dark matter halos, WIMPs can annihilate with one another, producing high-energy gamma rays among other things (S&T: April 2009, page 22). NASA's Fermi Gamma-ray Space Telescope is looking for such gamma rays. WIMPs can perhaps be made in energetic proton collisions at the Large Hadron Collider in Switzerland, where they would escape the detectors without interacting, but the missing energy and momentum would show that they had been created. This has not yet been seen. But even if such evidence starts appearing as the LHC particle-collision energy is increased, that would not prove that such particles are the dark matter unless we discover through direct or indirect detection that dark matter particles have the same mass.

The race is on to discover what the universe is mostly made of. Whoever discovers the nature of dark matter will surely win the Nobel Prize. — Joel R. Primack

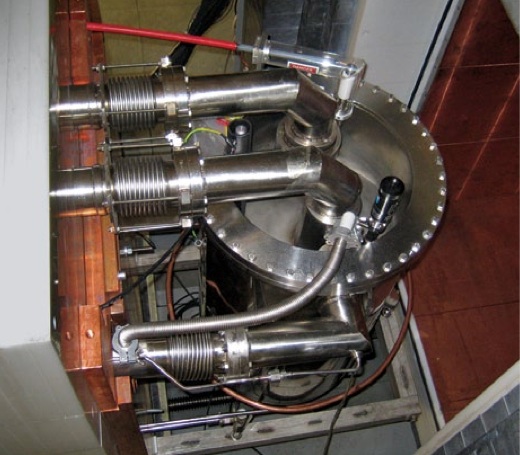

XENON COLLABORATION (2)

THE SEARCH XENON, an international collaboration led by Columbia University physicist Elena Aprile (above), is one of several teams trying to detect dark matter directly in underground experiments. The team's detector is in the left photo.

To study the universe, astronomers are mostly limited to receiving and analyzing the spectrum of electromagnetic radiation emitted by objects eons ago (although they also receive information from cosmic rays and neutrinos, and probably someday gravitational waves). Almost all the radiation ever emitted at all wavelengths is still traversing the universe. Astronomers now possess three key tools to understand those "fossil" photons: great telescopes and instruments on the ground and above the atmosphere to capture those photons, sufficiently clever theories to explain what they show, and powerful supercomputer simulations to construct cosmological models based on those observations and theories.

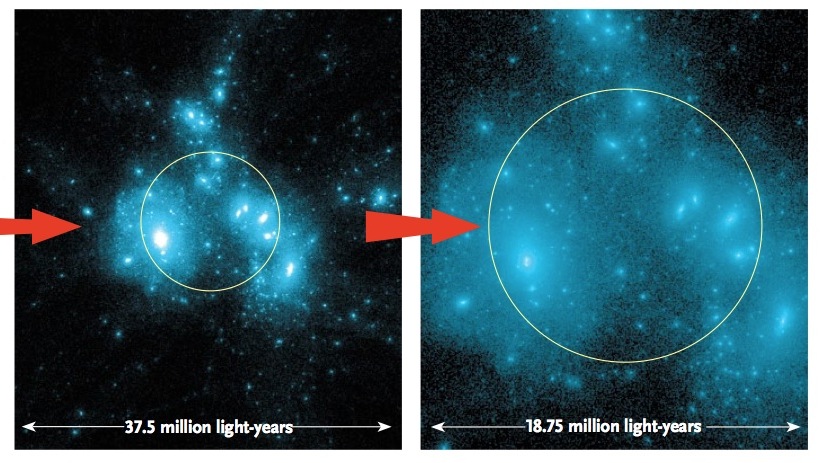

STEFAN GOTTLÖBER/ LEIBNIZ-INSTITUT FÜR ASTROPHYSIK POTSDAM

BIG BOLSHOI This 50-million-light-year-thick slice from the BigBolshoi simulation is just a tiny part of the overall simulation, which covers a volume of space 64 times the volume of Bolshoi. BigBolshoi predictions of the properties and distribution of clusters of galaxies closely match observations.

Supercomputers are essential tools in the scientific process. Cosmologists can't literally drag a big chunk of the universe into a lab and run tests on it, so they need to use computers to perform their experiments. By re-creating how the universe evolved from a time shortly after the Big Bang, and visualizing each snapshot in detail, supercomputer modeling is allowing astronomers to explore how subtle changes in initial conditions and hypothesized physics affect subsequent cosmic evolution, and to make predictions they can test with future observations of the real universe. In essence, through cosmological simulations, astronomy is becoming an experimental science. ✦

Joel R. Primack is Distinguished Professor of Physics at the University of California, Santa Cruz, and director of the University of California High-Performance AstroComputing Center (UC-HiPACC). Primack is also coauthor with Nancy Ellen Abrams of The New Universe and the Human Future (www.new-universe.org).

____________________________________________________________________

S&T contributing editor Trudy E. Bell (www.trudyebell.com) is Senior Writer for UC-HiPACC. She is the author of 19 bylined feature articles that have captured top journalism awards, including the 2006 David N. Schramm Award of the American Astronomical Society. She has written a dozen books.